Journal Description

Computation is a peer-reviewed journal of computational science and engineering published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), CAPlus / SciFinder, Inspec, dblp, and other databases.

- Journal Rank: CiteScore - Q2 (Applied Mathematics)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 18 days after submission; acceptance to publication is undertaken in 4.4 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.2 (2022); 5-Year Impact Factor: 2.2 (2022)

Latest Articles

Quasi-Interpolation on Chebyshev Grids with Boundary Corrections

Computation 2024, 12(5), 100; https://doi.org/10.3390/computation12050100 - 13 May 2024

Abstract

Quasi-interpolation is a powerful tool for approximating functions using radial basis functions (RBFs) such as Gaussian kernels. This avoids solving large systems of equations as in RBF interpolation. However, quasi-interpolation with Gaussian kernels on compact intervals can have significant errors near the boundaries. This paper proposes a quasi-interpolation method with Gaussian kernels using Chebyshev points and boundary corrections to improve the approximation near the boundaries. The boundary corrections use a linear approximation of the function beyond the interval to estimate the truncation error and add correction terms. Numerical studies on test functions show that the proposed method reduces errors significantly near boundaries compared to quasi-interpolation without corrections, for both equally spaced and Chebyshev points. The convergence and accuracy with the boundary corrections are generally better with Chebyshev points compared to equally spaced points. The proposed method provides an efficient way to perform quasi-interpolation on compact intervals while controlling the boundary errors. This study introduces a novel approach to quasi-interpolation modification, which significantly enhances convergence rates and minimizes errors at boundary points, thereby advancing the methods for boundary approximation.
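The boundary effect described in this abstract can be illustrated with a minimal sketch. It assumes a uniform grid, the standard Gaussian quasi-interpolant with a shape parameter of 2, and a simple linear extension of the samples beyond the interval; the function names and the extension rule are illustrative assumptions, not the correction terms or Chebyshev-grid scheme proposed in the paper.

```python
import math

def gaussian_quasi_interpolant(nodes, values, h, shape=2.0):
    """Return Q(x) = (pi*shape)^(-1/2) * sum_j values[j] * exp(-(x - nodes[j])^2 / (shape*h^2))."""
    norm = 1.0 / math.sqrt(math.pi * shape)

    def evaluate(x):
        return norm * sum(
            v * math.exp(-((x - xj) ** 2) / (shape * h * h))
            for xj, v in zip(nodes, values)
        )

    return evaluate

def extend_linearly(nodes, values, h, n_ghost=8):
    """Append ghost samples on both sides by linear extrapolation (assumed rule)."""
    slope_left = (values[1] - values[0]) / h
    slope_right = (values[-1] - values[-2]) / h
    left_nodes = [nodes[0] - k * h for k in range(n_ghost, 0, -1)]
    left_values = [values[0] - k * h * slope_left for k in range(n_ghost, 0, -1)]
    right_nodes = [nodes[-1] + k * h for k in range(1, n_ghost + 1)]
    right_values = [values[-1] + k * h * slope_right for k in range(1, n_ghost + 1)]
    return (left_nodes + list(nodes) + right_nodes,
            left_values + list(values) + right_values)

# Sample cos(x) on a uniform grid over [-1, 1].
h = 0.05
nodes = [-1.0 + j * h for j in range(41)]
values = [math.cos(x) for x in nodes]

plain = gaussian_quasi_interpolant(nodes, values, h)
ext_nodes, ext_values = extend_linearly(nodes, values, h)
corrected = gaussian_quasi_interpolant(ext_nodes, ext_values, h)

# Compare the error at the right endpoint with and without the extension.
err_plain = abs(plain(1.0) - math.cos(1.0))
err_corrected = abs(corrected(1.0) - math.cos(1.0))
print(f"boundary error without extension: {err_plain:.2e}")
print(f"boundary error with linear extension: {err_corrected:.2e}")
```

Without the ghost samples, roughly half of the kernel mass is missing at the endpoint, which is why the uncorrected error there is much larger than in the interior.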

(This article belongs to the Topic Mathematical Modeling)

Open Access Article

Intraplatelet Calcium Signaling Regulates Thrombus Growth under Flow: Insights from a Multiscale Model

by

Anass Bouchnita and Vitaly Volpert

Computation 2024, 12(5), 99; https://doi.org/10.3390/computation12050099 (registering DOI) - 12 May 2024

Abstract

In injured arteries, platelets adhere to the subendothelium and initiate the coagulation process. They recruit other platelets and form a plug that stops blood leakage. The formation of the platelet plug depends on platelet activation, a process that is regulated by intracellular calcium signaling. Using an improved version of a previous multiscale model, we study the effects of changes in calcium signaling on thrombus growth. This model utilizes the immersed boundary method to capture the interplay between platelets and the flow. Each platelet can attach to other platelets, become activated, express proteins on its surface, detach, and/or become non-adhesive. Platelet activation is captured through a specific calcium signaling model that is solved at the intracellular level, which considers calcium activation by agonists and contacts. Simulations reveal a contact-dependent activation threshold necessary for the formation of the thrombus core. Next, we evaluate the effect of knocking out the P2Y and PAR receptor families. Further, we show that blocking P2Y receptors reduces platelet numbers in the shell while slightly increasing the core size. An analysis of the contribution of P2Y and PAR activation to intraplatelet calcium signaling reveals that each of the ADP and thrombin agonists promotes the activation of platelets in different regions of the thrombus. Finally, the model predicts that the heterogeneity in platelet size reduces the overall number of platelets recruited by the thrombus. The presented framework can be readily used to study the effect of antiplatelet therapy under different physiological and pathological blood flow, platelet count, and activation conditions.

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Biology)

Open Access Article

An Optimization Model for Flight Rescheduling from an Airport’s Centralized Perspective for Better Management of Demand and Capacity Utilization

by

Abbas Seifi, Kumaraswamy Ponnambalam, Anna Kudiakova and Lisa Aultman-Hall

Computation 2024, 12(5), 98; https://doi.org/10.3390/computation12050098 (registering DOI) - 11 May 2024

Abstract

Over-capacity flight scheduling by commercial airlines due to the surging demand in recent years creates congestion and significant delays at major airports. This attitude towards maximizing throughput calls for tactical flight rescheduling to comply with airports’ capacity limitations and distribute the peak hour demand over the course of a day. Such displacements of flights may cause significant problems and costs for airlines and some cancellations or missed connections for passengers. This paper presents an optimization model for flight rescheduling at a schedule-coordinated airport to minimize congestion and flight delays at peak hours. The optimization model is used to make better scheduling intervention decisions considering airport resource constraints and safety of operation. A simulation algorithm is also developed to replicate arrival and departure processes in such an airport. The simulation adheres to a first come first served (FCFS) discipline and enforces runway capacity constraints and minimum turnaround times. We compare the delays caused by an ad hoc FCFS operation with those of the optimization model. Computational results from a case study demonstrate that a reduction of 52.6% and 61% in total delay times for arrival and departure flights, respectively, can be achieved. The optimization model also facilitates the implementation of a collaborative decision-making system for better coordination of airport traffic flow management with commercial airlines.
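The first-come, first-served baseline mentioned in this abstract can be sketched in a few lines. The sketch assumes a single runway and a fixed minimum separation between consecutive movements; the function name and the separation parameter are hypothetical, and turnaround times and the paper's optimization model are not represented.

```python
def fcfs_runway_delays(scheduled_times, min_separation):
    """Serve flights in scheduled order on one runway and return each flight's delay.

    A flight uses the runway at its scheduled time or as soon as the runway is
    free again, whichever is later (assumed single-runway FCFS rule).
    """
    delays = []
    runway_free_at = float("-inf")
    for scheduled in sorted(scheduled_times):
        actual = max(scheduled, runway_free_at)
        delays.append(actual - scheduled)
        runway_free_at = actual + min_separation
    return delays

# Three flights bunched at a peak, then one later flight (times in minutes).
delays = fcfs_runway_delays([0, 1, 2, 10], min_separation=3)
total_delay = sum(delays)
print(delays, total_delay)
```

Spreading the three bunched flights at least three minutes apart in the schedule would remove the delay entirely, which is the kind of displacement a rescheduling model trades off against its cost to airlines.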

(This article belongs to the Section Computational Engineering)

Open Access Review

Review of Modeling Approaches for Conjugate Heat Transfer Processes in Oil-Immersed Transformers

by

Ivan Smolyanov, Evgeniy Shmakov, Denis Butusov and Alexandra I. Khalyasmaa

Computation 2024, 12(5), 97; https://doi.org/10.3390/computation12050097 (registering DOI) - 11 May 2024

Abstract

This review addresses the modeling approaches for heat transfer processes in oil-immersed transformers. Electromagnetic, thermal, and hydrodynamic thermal fields are identified as the most critical aspects in describing the state of the transformer. The paper compares the implementation complexity, calculation time, and details of the results for different approaches to creating a mathematical model, such as circuit-based models and finite element and finite volume methods. Examples of successful model implementation are provided, along with the features of oil-immersed transformer modeling. In addition, the review considers the strengths and limitations of these models in relation to creating a digital twin of a transformer. The review concludes that it is not feasible to create a universal model that accounts for all the features of physical processes in an oil-immersed transformer, operates in real time for a digital twin, and provides the required accuracy at the same time. The conducted research shows that joint modeling of electromagnetic and thermal processes, together with reducing the dimensionality of models, provides the most comprehensive solution to the problem.

Open Access Article

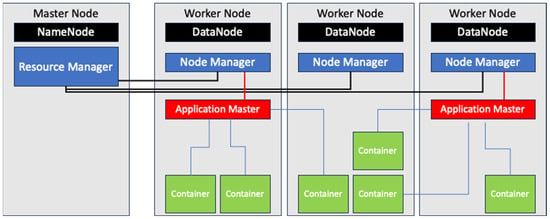

Optimizing Hadoop Scheduling in Single-Board-Computer-Based Heterogeneous Clusters

by

Basit Qureshi

Computation 2024, 12(5), 96; https://doi.org/10.3390/computation12050096 - 9 May 2024

Abstract

Single-board computers (SBCs) are emerging as an efficient and economical solution for fog and edge computing, providing localized big data processing with lower energy consumption. Newer and faster SBCs deliver improved performance while still maintaining a compact form factor and cost-effectiveness. In recent times, researchers have addressed scheduling issues in Hadoop-based SBC clusters. Despite their potential, traditional Hadoop configurations struggle to optimize performance in heterogeneous SBC clusters due to disparities in computing resources. Consequently, we propose modifications to the scheduling mechanism to address these challenges. In this paper, we leverage the use of node labels introduced in Hadoop 3+ and define a Frugality Index that categorizes and labels SBC nodes based on their physical capabilities, such as CPU, memory, disk space, etc. Next, an adaptive configuration policy modifies the native fair scheduling policy by dynamically adjusting resource allocation in response to workload and cluster conditions. Furthermore, the proposed frugal configuration policy considers prioritizing the reduced tasks based on the Frugality Index to maximize parallelism. To evaluate our proposal, we construct a 13-node SBC cluster and conduct empirical evaluation using the Hadoop CPU and IO intensive microbenchmarks. The results demonstrate significant performance improvements compared to native Hadoop FIFO and capacity schedulers, with execution times 56% and 22% faster than the best_cap and best_fifo scenarios. Our findings underscore the effectiveness of our approach in managing the heterogeneous nature of SBC clusters and optimizing performance across various hardware configurations.

(This article belongs to the Section Computational Engineering)

Open Access Article

Analysis and Control of Partially Observed Discrete-Event Systems via Positively Constructed Formulas

by

Artem Davydov, Aleksandr Larionov and Nadezhda Nagul

Computation 2024, 12(5), 95; https://doi.org/10.3390/computation12050095 - 9 May 2024

Abstract

This paper establishes a connection between control theory for partially observed discrete-event systems (DESs) and automated theorem proving (ATP) in the calculus of positively constructed formulas (PCFs). The language of PCFs is a complete first-order language providing a powerful tool for qualitative analysis of dynamical systems. Based on ATP in the PCF calculus, a new technique is suggested for checking observability as a property of formal languages, which is necessary for the existence of supervisory control of DESs. In the case of violation of observability, words causing a conflict can also be extracted with the help of a specially designed PCF. Using the example of path planning by a robot in an unknown environment, we show the application of our approach at one of the levels of a robot control system. The prover Bootfrost, developed to facilitate PCF refutation, is also presented. The tests show positive results and good prospects for the presented approach.

(This article belongs to the Special Issue Advanced Information, Computation, and Control Systems for Distributed Environments II)

Open Access Article

To Bind or Not to Bind? A Comprehensive Characterization of TIR1 and Auxins Using Consensus In Silico Approaches

by

Fernando D. Prieto-Martínez, Jennifer Mendoza-Cañas and Karina Martínez-Mayorga

Computation 2024, 12(5), 94; https://doi.org/10.3390/computation12050094 - 9 May 2024

Abstract

Auxins are chemical compounds of wide interest, mostly due to their role in plant metabolism and development. Synthetic auxins have been used as herbicides for more than 75 years and low toxicity in humans is one of their most advantageous features. Extensive studies of natural and synthetic auxins have been made in an effort to understand their role in plant growth. However, molecular details of the binding and recognition process are still an open question. Herein, we present a comprehensive in silico pipeline for the assessment of TIR1 ligands using several structure-based methods. Our results suggest that subtle dynamics within the binding pocket arise from water–ligand interactions. We also show that this trait distinguishes effective binders. Finally, we construct a database of putative ligands and decoy compounds, which can aid further studies focusing on synthetic auxin design. To the best of our knowledge, this study is the first of its kind focusing on TIR1.

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Chemistry)

Open Access Article

Elasto-Plastic Analysis of Two-Way Reinforced Concrete Slabs Strengthened with Carbon Fiber Reinforced Polymer Laminates

by

Zahraa Saleem Sharhan and Majid Movahedi Rad

Computation 2024, 12(5), 93; https://doi.org/10.3390/computation12050093 - 8 May 2024

Abstract

This study explores a technique for enhancing the punching strength of reinforced concrete (RC) flat slabs, namely carbon fiber reinforced polymer (CFRP). Four large-scale RC flat slabs were fabricated, to assess the efficacy of this strengthening method. One slab served as a reference and the three other specimens were strengthened with CFRP, as a method of external strengthening. These slabs, featuring identical overall dimensions and flexural steel reinforcement, underwent testing until failure, under the influence of concentrated patch loads. A concrete plastic damage constitutive model (CDP) was developed and employed to examine the strength of two-way RC slabs. Additionally, to enhance the strength of existing RC slabs, carbon fiber reinforced polymer (CFRP) strips are affixed to the tension surface of the sections. The research begins with the calibration of a numerical model, based on data from laboratory tests. The objective of this study is to constrain the plastic behavior of two-way RC slabs reinforced with CFRP, with a focus on establishing an optimal elasto-plastic analysis, aimed at controlling concrete damage plasticity using CFRP, and employing a plastic limit load multiplier. Subsequently, a series of numerical simulations, incorporating different variables, are conducted to investigate shear behavior. The numerical results indicate that an increase in the strengthening ratio has a significant impact on shear strength. Finite element simulations are carried out using Abaqus CAE®/2018.

(This article belongs to the Section Computational Engineering)

Open Access Article

Numerical Estimation of Nonlinear Thermal Conductivity of SAE 1020 Steel

by

Ariel Flores Monteiro de Oliveira, Elisan dos Santos Magalhães, Kahl Dick Zilnyk, Philippe Le Masson and Ernandes José Gonçalves do Nascimento

Computation 2024, 12(5), 92; https://doi.org/10.3390/computation12050092 - 4 May 2024

Abstract

Thermally characterizing high-thermal conductivity materials is challenging, especially considering high temperatures. However, the modeling of heat transfer processes requires specific material information. The present study addresses an inverse approach to estimate the thermal conductivity of SAE 1020 relative to temperature during an autogenous LASER Beam Welding (LBW) experiment. The temperature profile during LBW is computed with the aid of an in-house CUDA-C algorithm. Here, the governing three-dimensional heat diffusion equation is discretized through the Finite Volume Method (FVM) and solved using the Successive Over-Relaxation (SOR) parallelized iterative solver. With temperature information, one may employ a minimization procedure to assess thermal properties or process parameters. In this work, the Quadrilateral Optimization Method (QOM) is applied to perform estimations because it allows for the simultaneous optimization of variables with no quantity restriction and renders the assessment of parameters in unsteady states valid, thereby preventing the requirement for steady-state experiments. We extended QOM’s prior applicability to account for more parameters concurrently. In Case I, the optimization of the three parameters that compose the second-degree polynomial function model of thermal conductivity is performed. In Case II, the heat distribution model’s gross heat rate (Ω) is also estimated in addition to the previous parameters. Ω [W] quantifies the power the sample receives and is related to the process’s efficiency. The method’s suitability for estimating the parameters was confirmed by investigating the reduced sensitivity coefficients, while the method’s stability was corroborated by performing the estimates with noisy data. There is a good agreement between the reference and estimated values. Hence, this study introduces a proper methodology for estimating a temperature-dependent thermal property and an LBW parameter. Because parallel computation improves the performance of the present algorithm, a balanced compromise between estimation reliability and computational cost is achieved.
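The Successive Over-Relaxation iteration named in this abstract can be illustrated on a much smaller problem. The sketch below is a serial, one-dimensional, steady-state example with fixed end temperatures and constant conductivity; it is an assumption-laden illustration of the SOR update only, not the authors' parallel CUDA-C solver for the three-dimensional transient equation. The function name, relaxation factor, and tolerance are hypothetical choices.

```python
def sor_steady_heat_1d(n_intervals, t_left, t_right, omega=1.5, tol=1e-10, max_iter=10_000):
    """Solve d2T/dx2 = 0 on a uniform grid with fixed end temperatures using SOR."""
    temps = [0.0] * (n_intervals + 1)
    temps[0], temps[-1] = t_left, t_right
    for iteration in range(1, max_iter + 1):
        max_change = 0.0
        for i in range(1, n_intervals):
            # Gauss-Seidel value: average of the two neighbors (latest values).
            gauss_seidel = 0.5 * (temps[i - 1] + temps[i + 1])
            # SOR blends the old value with the Gauss-Seidel value.
            new_value = (1.0 - omega) * temps[i] + omega * gauss_seidel
            max_change = max(max_change, abs(new_value - temps[i]))
            temps[i] = new_value
        if max_change < tol:
            return temps, iteration
    raise RuntimeError("SOR did not converge within max_iter iterations")

temps, iterations = sor_steady_heat_1d(20, t_left=0.0, t_right=100.0)
print(f"converged in {iterations} sweeps; midpoint temperature = {temps[10]:.4f}")
```

With a relaxation factor of 1 the update reduces to Gauss-Seidel; values between 1 and 2 over-relax the update and typically reduce the number of sweeps for this kind of problem.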

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Heat and Mass Transfer (ICCHMT 2023))

Open Access Article

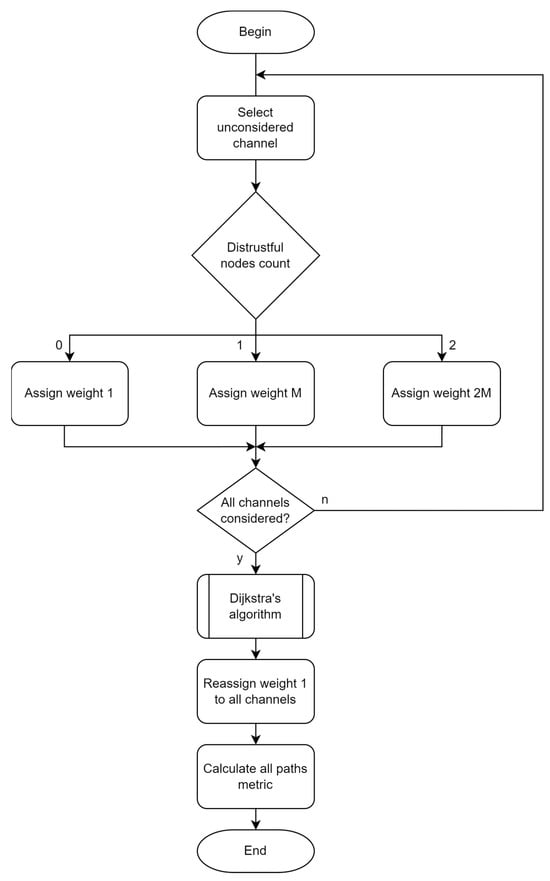

Minimizing the Number of Distrustful Nodes on the Path of IP Packet Transmission

by

Kvitoslava Obelovska, Oleksandr Tkachuk and Yaromyr Snaichuk

Computation 2024, 12(5), 91; https://doi.org/10.3390/computation12050091 - 3 May 2024

Abstract

One of the important directions for improving modern Wide Area Networks is efficient and secure packet routing. Efficient routing is often based on using the shortest paths, while ensuring security involves preventing the possibility of packet interception. The work is devoted to improving the security of data transmission in IP networks. A new approach is proposed to minimize the number of distrustful nodes on the path of IP packet transmission. By a distrustful node, we mean a node that works correctly in terms of hardware and software and fully implements its data transport functions, but from the point of view of its organizational subordination, we are not sure that the node will not violate security rules to prevent unauthorized access and interception of data. A distrustful node can be either a transit or an end node. To implement this approach, we modified Dijkstra’s shortest path tree construction algorithm. The modified algorithm ensures that we obtain a path that will pass only through trustful nodes, if such a path exists. If there is no such path, the path will have the minimum possible number of distrustful intermediate nodes. The number of intermediate nodes in the path was used as a metric to obtain the shortest path trees. Routing tables of routers, built on the basis of trees obtained using a modified algorithm, provide increased security of data transmission, minimizing the use of distrustful nodes.
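One way to realize the behavior described in this abstract is to run Dijkstra's algorithm with a lexicographic cost: first the number of distrustful nodes entered, then the hop count. The sketch below is an illustrative assumption about how such a modification could look, not the authors' algorithm; the function names and the example topology are hypothetical.

```python
import heapq

def trust_aware_tree(graph, source, distrustful):
    """Shortest-path tree under the cost (distrustful nodes entered, hops).

    Tuples compare lexicographically, so a path through fewer distrustful
    nodes always wins, and hop count only breaks ties.
    """
    best = {source: (0, 0)}
    parent = {source: None}
    heap = [(0, 0, source)]
    while heap:
        n_distrust, hops, node = heapq.heappop(heap)
        if (n_distrust, hops) > best[node]:
            continue  # stale queue entry
        for neighbor in graph[node]:
            candidate = (n_distrust + (1 if neighbor in distrustful else 0), hops + 1)
            if neighbor not in best or candidate < best[neighbor]:
                best[neighbor] = candidate
                parent[neighbor] = node
                heapq.heappush(heap, (candidate[0], candidate[1], neighbor))
    return best, parent

def path_to(parent, target):
    """Rebuild the path from the source to target by following parent links."""
    path = []
    while target is not None:
        path.append(target)
        target = parent[target]
    return path[::-1]

# Hypothetical topology: the two-hop route A-B-D passes through distrustful B.
graph = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "E"],
    "D": ["B", "E"],
    "E": ["C", "D"],
}
_, parent_one = trust_aware_tree(graph, "A", distrustful={"B"})
route_one = path_to(parent_one, "D")

# If C is distrustful too, every route has one distrustful transit node,
# so the shorter route through B is chosen.
_, parent_two = trust_aware_tree(graph, "A", distrustful={"B", "C"})
route_two = path_to(parent_two, "D")
print(route_one, route_two)
```

Because a distrustful destination adds the same constant to every path that reaches it, counting it in the cost does not change which path is selected; only transit nodes influence the choice.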

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Engineering)

Open Access Article

Selection of Appropriate Criteria for Optimization of Ventilation Element for Protective Clothing Using a Numerical Approach

by

Sanjay Rajni Vejanand, Alexander Janushevskis and Ivo Vaicis

Computation 2024, 12(5), 90; https://doi.org/10.3390/computation12050090 - 2 May 2024

Abstract

While there are multiple methods to ventilate protective clothing, there is still room for improvement. In our research, we are using ventilation elements that are positioned at the ventilation holes in the air space between the body and clothing. These ventilation elements allow air to flow freely while preventing solar radiation, raindrops, and insects from directly accessing the body. Therefore, the shape of the ventilation element is crucial. This led us to study the shape optimization of ventilation elements through the utilization of metamodels and numerical approaches. In order to accomplish the objective, it is crucial to thoroughly evaluate and choose suitable criteria for the optimization process. We know from prior research that the toroidal cut-out shape element provides better results. In a previous study, we optimized the shape of this element based on the minimum pressure difference as a criterion. In this study, we are using different criteria for the shape optimization of ventilation elements to determine which are most effective. This study involves a metamodeling strategy that utilizes local and global approximations with different order polynomials, as well as Kriging approximations, for the purpose of optimizing the geometry of ventilation elements. The goal was achieved through a sequential process: (1) planning the position of control points of Non-Uniform Rational B-Splines (NURBS) in order to generate elements with a smooth shape; (2) constructing geometric CAD models based on the design of experiments; (3) computing detailed model solutions using SolidWorks Flow Simulation; (4) developing metamodels for responses using computer experiments; and (5) optimizing the element shape using metamodels. The procedure is repeated for six criteria, and subsequently, the results are compared to determine the most efficient criteria for optimizing the design of the ventilation element.

(This article belongs to the Section Computational Engineering)

Open Access Article

Analysis of a Novel Method for Generating 3D Mesh at Contact Points in Packed Beds

by

Daniel F. Szambien, Maximilian R. Ziegler, Christoph Ulrich and Roland Scharf

Computation 2024, 12(5), 89; https://doi.org/10.3390/computation12050089 - 30 Apr 2024

Abstract

This study comprehensively analyzes the impact of the novel HybridBridge method, developed by the authors for generating a 3D mesh at contact points within packed beds, on the effective thermal conductivity. It compares HybridBridge with alternative methodologies, highlights its superiority and outlines potential applications. The HybridBridge employs two independent geometry parameters to facilitate optimal flow mapping while maintaining physically accurate effective thermal conductivity and ensuring high mesh quality. A method is proposed to estimate the HybridBridge radius for a defined packed bed and cap height, enabling a presimulative determination of a suitable radius. Numerical analysis of a body-centered-cubic unit cell with varied HybridBridges is conducted alongside previous simulations involving a simple-cubic unit cell. Additionally, a physically based resistance model is introduced, delineating effective thermal conductivity as a function of the HybridBridge geometry and porosity. An equation for the HybridBridge radius, tailored to simulation parameters, is derived. Comparison with the unit cells and a randomly packed bed reveals an acceptable average deviation between the calculated and utilized radii, thereby streamlining and refining the implementation of the HybridBridge methodology.

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Heat and Mass Transfer (ICCHMT 2023))

Open Access Article

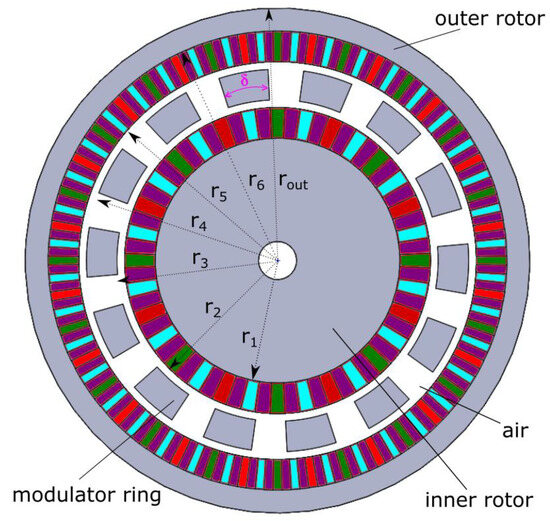

Torque Calculation and Dynamical Response in Halbach Array Coaxial Magnetic Gears through a Novel Analytical 2D Model

by

Panteleimon Tzouganakis, Vasilios Gakos, Christos Kalligeros, Christos Papalexis, Antonios Tsolakis and Vasilios Spitas

Computation 2024, 12(5), 88; https://doi.org/10.3390/computation12050088 - 27 Apr 2024

Abstract

Coaxial magnetic gears have piqued the interest of researchers due to their numerous benefits over mechanical gears. These include reduced noise and vibration, enhanced efficiency, lower maintenance costs, and improved backdrivability. However, their adoption in industry has been limited by drawbacks like lower torque density and slippage at high torque levels. This work presents an analytical 2D model to compute the magnetic potential in Halbach array coaxial magnetic gears for every rotational angle, geometry configuration, and magnet specifications. This model calculates the induced torques and torque ripple in both rotors using the Maxwell Stress Tensor. The results were confirmed through Finite Element Analysis (FEA). Unlike FEA, this analytical model directly produces harmonics values, leading to faster computational times as it avoids torque calculations at each time step. In a case study, a standard coaxial magnetic gear was compared to one with a Halbach array, revealing a 14.3% improvement in torque density and a minor reduction in harmonics that cause torque ripple. Additionally, a case study was conducted to examine slippage in both standard and Halbach array gears during transient operations. The Halbach array coaxial magnetic gear demonstrated a 13.5% lower transmission error than its standard counterpart.

Open Access Article

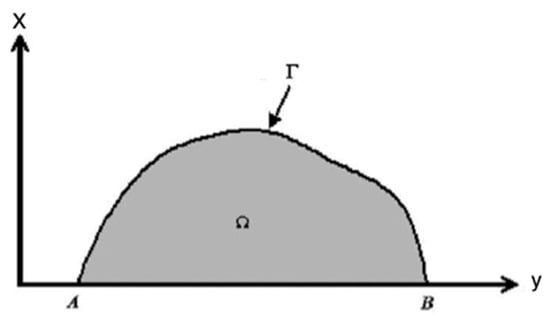

BEM Modeling for Stress Sensitivity of Nonlocal Thermo-Elasto-Plastic Damage Problems

by

Mohamed Abdelsabour Fahmy

Computation 2024, 12(5), 87; https://doi.org/10.3390/computation12050087 - 23 Apr 2024

Abstract

The main objective of this paper is to propose a new boundary element method (BEM) modeling for stress sensitivity of nonlocal thermo-elasto-plastic damage problems. The numerical solution of the heat conduction equation subjected to a non-local condition is described using a boundary element model. The total amount of heat energy contained inside the solid under consideration is specified by the non-local condition. The procedure of solving the heat equation will reveal an unknown control function that governs the temperature on a specific region of the solid’s boundary. The initial stress BEM for structures with strain-softening damage is employed in a boundary element program with iterations in each load increment to develop a plasticity model with yield limit deterioration. To avoid the difficulties associated with the numerical calculation of singular integrals, the regularization technique is applicable to integral operators. To validate the physical correctness and efficiency of the suggested formulation, a numerical case is solved.

(This article belongs to the Topic Advances in Computational Materials Sciences)

Open Access Article

Manufacture of Microstructured Optical Fibers: Problem of Optimal Control of Silica Capillary Drawing Process

by

Daria Vladimirova, Vladimir Pervadchuk and Yuri Konstantinov

Computation 2024, 12(5), 86; https://doi.org/10.3390/computation12050086 - 23 Apr 2024

Abstract

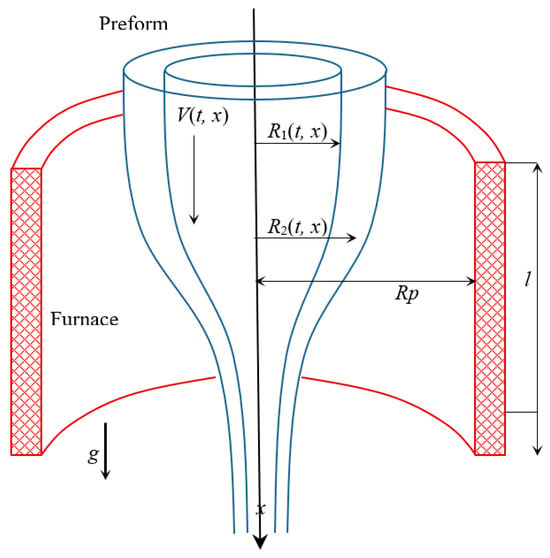

The effective control of any technological process is essential in ensuring high-quality finished products. This is particularly true in manufacturing knowledge-intensive and high-tech products, including microstructured photonic crystal fibers (PCF). This paper addresses the issues of stabilizing the optimal control of the silica

[...] Read more.

The effective control of any technological process is essential in ensuring high-quality finished products. This is particularly true in manufacturing knowledge-intensive and high-tech products, including microstructured photonic crystal fibers (PCF). This paper addresses the issues of stabilizing the optimal control of the silica capillary drawing process. The silica capillaries are the main components of PCF. A modified mathematical model proposed by the authors is used as the basic model of capillary drawing. The uniqueness of this model is that it takes into account the main forces acting during drawing (gravity, inertia, viscosity, surface tension, pressure inside the drawn capillary), as well as all types of heat transfer (heat conduction, convection, radiation). In the first stage, the system of partial differential equations describing heat and mass transfer was linearized. Then, the problem of the optimal control of the drawing process was formulated, and optimization systems for the isothermal and non-isothermal cases were obtained. In the isothermal case, optimal adjustments of the drawing speed were obtained for different objective functionals. Thus, the proposed approach allows for the constant monitoring and adjustment of the observed state parameters (for example, the outer radius of the capillary). This is possible due to the optimal control of the drawing speed to obtain high-quality preforms. The ability to control and promptly eliminate geometric defects in the capillary was confirmed by the analysis of the numerical calculations, according to which even 15% deviations in the outer radius of the capillary during the drawing process can be reduced to 4–5% by controlling only the capillary drawing speed.

(This article belongs to the Special Issue Mathematical Modeling and Study of Nonlinear Dynamic Processes)

Open Access Article

Detecting Overlapping Communities Based on Influence-Spreading Matrix and Local Maxima of a Quality Function

by

Vesa Kuikka

Computation 2024, 12(4), 85; https://doi.org/10.3390/computation12040085 - 22 Apr 2024

Abstract

Community detection is a widely studied topic in network structure analysis. We propose a community detection method based on the search for the local maxima of an objective function. This objective function reflects the quality of candidate communities in the network structure. The objective function can be constructed from a probability matrix that describes interactions in a network. Different models, such as network structure models and network flow models, can be used to build the probability matrix, and it acts as a link between network models and community detection models. In our influence-spreading model, the probability matrix is called an influence-spreading matrix, which describes the directed influence between all pairs of nodes in the network. By using the local maxima of an objective function, our method can standardise and help in comparing different definitions and approaches of community detection. Our proposed approach can detect overlapping and hierarchical communities and their building blocks within a network. To compare different structures in the network, we define a cohesion measure. The objective function can be expressed as a sum of these cohesion measures. We also discuss the probability of community formation to analyse a different aspect of group behaviour in a network. It is essential to recognise that this concept is separate from the notion of community cohesion, which emphasises the need for varying objective functions in different applications. Furthermore, we demonstrate that normalising objective functions by the size of detected communities can alter their rankings.

(This article belongs to the Special Issue Computational Social Science and Complex Systems)

Open Access Article

A Weighted and Epsilon-Constraint Biased-Randomized Algorithm for the Biobjective TOP with Prioritized Nodes

by

Lucia Agud-Albesa, Neus Garrido, Angel A. Juan, Almudena Llorens and Sandra Oltra-Crespo

Computation 2024, 12(4), 84; https://doi.org/10.3390/computation12040084 - 20 Apr 2024

Abstract

This paper addresses a multiobjective version of the Team Orienteering Problem (TOP). The TOP focuses on selecting a subset of customers for maximum rewards while considering time and fleet size constraints. This study extends the TOP by considering two objectives: maximizing total rewards from customer visits and maximizing visits to prioritized nodes. The MultiObjective TOP (MO-TOP) is formulated mathematically to concurrently tackle these objectives. A multistart biased-randomized algorithm is proposed to solve MO-TOP, integrating exploration and exploitation techniques. The algorithm employs a constructive heuristic defining biefficiency to select edges for routing plans. Through iterative exploration from various starting points, the algorithm converges to high-quality solutions. The Pareto frontier for the MO-TOP is generated using the weighted method, epsilon-constraint method, and Epsilon-Modified Method. Computational experiments validate the proposed approach’s effectiveness, illustrating its ability to generate diverse and high-quality solutions on the Pareto frontier. The algorithms demonstrate the ability to optimize rewards and prioritize node visits, offering valuable insights for real-world decision making in team orienteering applications.
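As a rough illustration of the two classical scalarization methods named in this abstract, the sketch below applies the weighted method and the epsilon-constraint method to a small, made-up set of candidate solutions. The data, the function names `weighted_method` and `epsilon_constraint`, and the choice of weights and bounds are all assumptions for illustration. The sketch does not reproduce the paper's biased-randomized algorithm or its Epsilon-Modified Method.

```python
from typing import Iterable, List, Tuple

# Hypothetical toy data: each tuple is (total reward, prioritized-node visits)
# for one candidate routing plan. Both objectives are maximized.
Point = Tuple[int, int]
candidates: List[Point] = [(10, 0), (6, 3), (5, 2), (0, 10)]


def weighted_method(points: List[Point], weights: Iterable[float]) -> List[Point]:
    """For each weight w, keep the point that maximizes w*f1 + (1 - w)*f2."""
    chosen = set()
    for w in weights:
        chosen.add(max(points, key=lambda p: w * p[0] + (1 - w) * p[1]))
    return sorted(chosen)


def epsilon_constraint(points: List[Point], epsilons: Iterable[float]) -> List[Point]:
    """For each bound eps, maximize f1 subject to f2 >= eps."""
    chosen = set()
    for eps in epsilons:
        feasible = [p for p in points if p[1] >= eps]
        if feasible:
            # Tuple comparison: best f1 first, ties broken by the larger f2.
            chosen.add(max(feasible))
    return sorted(chosen)


if __name__ == "__main__":
    print(weighted_method(candidates, [0.1, 0.3, 0.7, 0.9]))
    print(epsilon_constraint(candidates, range(0, 11)))
```

On this toy set the weighted method returns only the two extreme plans, (0, 10) and (10, 0), while the epsilon-constraint sweep also recovers (6, 3), a non-dominated plan that lies in a non-convex part of the frontier. This is one common reason for using both methods together.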

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Engineering)

Open Access Article

An Implementation of LASER Beam Welding Simulation on Graphics Processing Unit Using CUDA

by

Ernandes Nascimento, Elisan Magalhães, Arthur Azevedo, Luiz E. S. Paes and Ariel Oliveira

Computation 2024, 12(4), 83; https://doi.org/10.3390/computation12040083 - 17 Apr 2024

Cited by 1

Abstract

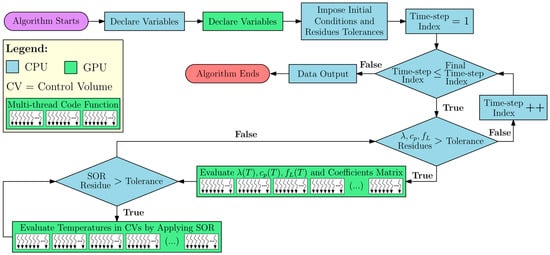

The maximum number of parallel threads in traditional CFD solutions is limited by the Central Processing Unit (CPU) capacity, which is lower than the capabilities of a modern Graphics Processing Unit (GPU). In this context, the GPU allows for simultaneous processing of several

[...] Read more.

The maximum number of parallel threads in traditional CFD solutions is limited by the Central Processing Unit (CPU) capacity, which is lower than the capabilities of a modern Graphics Processing Unit (GPU). In this context, the GPU allows for simultaneous processing of several parallel threads in double-precision floating-point format. The present study evaluated the advantages and drawbacks of implementing LASER Beam Welding (LBW) simulations using the CUDA platform. The performance of the developed code was compared to that of three top-rated commercial codes executed on the CPU. The unsteady three-dimensional heat conduction Partial Differential Equation (PDE) was discretized in space and time using the Finite Volume Method (FVM). The Volumetric Thermal Capacitor (VTC) approach was employed to model the melting-solidification. The GPU solutions were computed using an in-house CUDA C code, running on a Gigabyte Nvidia GeForce RTX™ 3090 video card and an MSI 4090 video card (both made in Hsinchu, Taiwan), each with 24 GB of memory. The commercial solutions were executed on an Intel® Core™ i9-12900KF CPU (made in Hillsboro, Oregon, United States of America) with a 3.6 GHz base clock and 16 cores. The results demonstrated that GPU and CPU processing achieve similar precision, but the GPU solution was significantly faster and more power efficient, with speed-ups ranging from 75.6 to 1351.2 times compared to the CPU solutions. The in-house code also demonstrated optimized memory usage, using on average 3.86 times less RAM. Therefore, adopting parallelized algorithms run on a GPU can lead to reduced CFD computational costs compared to traditional codes while maintaining high accuracy.
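To make the finite-volume discretization mentioned in this abstract more concrete, here is a minimal one-dimensional sketch in plain Python. It is an assumption-laden simplification, not the authors' code: the paper's solver is three-dimensional, written in CUDA C, and includes the VTC phase-change model, none of which appear here. The explicit time stepping, the insulated boundaries, and the names `fvm_heat_step` and `simulate` are illustrative choices; the abstract does not say which time integration scheme the paper uses.

```python
from typing import List


def fvm_heat_step(T: List[float], alpha: float, dx: float, dt: float) -> List[float]:
    """One explicit finite-volume step for dT/dt = alpha * d2T/dx2 (insulated ends)."""
    n = len(T)
    # Diffusive flux (already divided by rho*c) at each interior face,
    # i.e. the face between cell i and cell i + 1.
    interior = [-alpha * (T[i + 1] - T[i]) / dx for i in range(n - 1)]
    # Zero flux through the two boundary faces (adiabatic walls).
    faces = [0.0] + interior + [0.0]
    # Cell balance: flux in through the left face minus flux out through the right.
    return [T[i] + dt / dx * (faces[i] - faces[i + 1]) for i in range(n)]


def simulate(T0: List[float], alpha: float, dx: float, dt: float, steps: int) -> List[float]:
    """Advance the temperature field by the given number of explicit steps."""
    if dt > dx * dx / (2.0 * alpha):
        raise ValueError("explicit scheme unstable: need dt <= dx^2 / (2 * alpha)")
    T = list(T0)
    for _ in range(steps):
        T = fvm_heat_step(T, alpha, dx, dt)
    return T


if __name__ == "__main__":
    # Hypothetical setup: one hot cell in an otherwise cold, insulated bar.
    final = simulate([100.0] + [0.0] * 9, alpha=1.0, dx=1.0, dt=0.25, steps=2000)
    print(final)
```

In an explicit update of this kind, each cell's new value depends only on values from the previous time step, so the cell updates are independent of one another. That independence is what makes assigning one GPU thread per cell a natural mapping.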

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Heat and Mass Transfer (ICCHMT 2023))

Open Access Article

Graph-Based Interpretability for Fake News Detection through Topic- and Propagation-Aware Visualization

by

Kayato Soga, Soh Yoshida and Mitsuji Muneyasu

Computation 2024, 12(4), 82; https://doi.org/10.3390/computation12040082 - 15 Apr 2024

Abstract

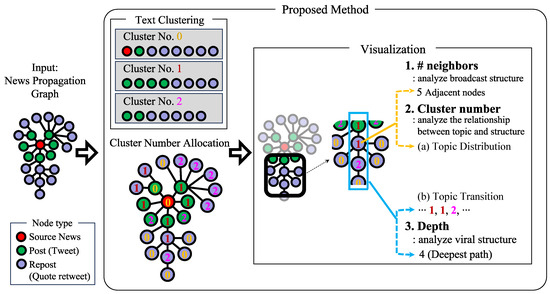

In the context of the increasing spread of misinformation via social network services, in this study, we addressed the critical challenge of detecting and explaining the spread of fake news. Early detection methods focused on content analysis, whereas recent approaches have exploited the

[...] Read more.

Given the increasing spread of misinformation via social network services, this study addressed the critical challenge of detecting and explaining the spread of fake news. Early detection methods focused on content analysis, whereas recent approaches have exploited the distinctive propagation patterns of fake news to analyze network graphs of news sharing. However, these accurate methods lack accountability and provide little insight into the reasoning behind their classifications. We aimed to fill this gap by elucidating the structural differences in the spread of fake and real news, with a focus on opinion consensus within these structures. We present a novel method that improves the interpretability of graph-based propagation detectors by visualizing article topics and propagation structures using BERTopic for topic classification and analyzing the effect of topic agreement on propagation patterns. By applying this method to a real-world dataset and conducting a comprehensive case study, we not only demonstrated the effectiveness of the method in identifying characteristic propagation paths but also proposed new metrics for evaluating the interpretability of the detection methods. Our results provide valuable insights into the structural behavior and patterns of news propagation, contributing to the development of more transparent and explainable fake news detection systems.

(This article belongs to the Special Issue Computational Social Science and Complex Systems)

Open Access Article

Air–Water Two-Phase Flow Dynamics Analysis in Complex U-Bend Systems through Numerical Modeling

by

Ergin Kükrer and Nurdil Eskin

Computation 2024, 12(4), 81; https://doi.org/10.3390/computation12040081 - 12 Apr 2024

Abstract

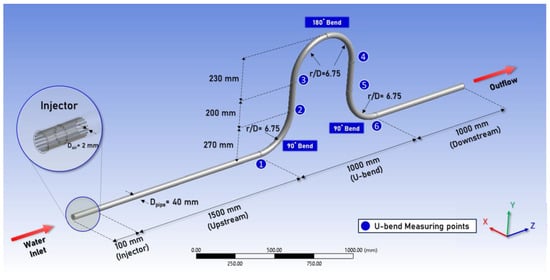

This study aims to provide insights into the intricate interactions between gas and liquid phases within flow components, which are pivotal in various industrial sectors such as nuclear reactors, oil and gas pipelines, and thermal management systems. Employing the Eulerian–Eulerian approach, our computational

[...] Read more.

This study aims to provide insights into the intricate interactions between gas and liquid phases within flow components, which are pivotal in various industrial sectors such as nuclear reactors, oil and gas pipelines, and thermal management systems. Employing the Eulerian–Eulerian approach, our computational model incorporates interphase relations, including drag and non-drag forces, to analyze phase distribution and velocities within a complex U-bend system. The U-bend system comprises two horizontal-to-vertical bends and one vertical 180-degree elbow; its behavior with respect to bend geometry and airflow rates is scrutinized, highlighting their significant impact on multiphase flow dynamics. The study not only presents a detailed exposition of the numerical modeling techniques tailored for this complex geometry but also discusses the results obtained. Detailed analyses of local void fraction and phase velocities for each phase are provided. Furthermore, experimental validation enhances the reliability of our computational findings, with close agreement observed between computational and experimental results. Overall, the study underscores the efficacy of the Eulerian–Eulerian approach with interphase relations in capturing the complex behavior of the multiphase flow in U-bend systems, offering valuable insights for hydraulic system design and optimization in industrial applications.

(This article belongs to the Special Issue 10th Anniversary of Computation—Computational Heat and Mass Transfer (ICCHMT 2023))

Topics

Topic in

Axioms, Computation, MCA, Mathematics, Symmetry

Mathematical Modeling

Topic Editors: Babak Shiri, Zahra Alijani

Deadline: 31 May 2024

Topic in

Entropy, Algorithms, Computation, Fractal Fract

Computational Complex Networks

Topic Editors: Alexandre G. Evsukoff, Yilun Shang

Deadline: 30 June 2024

Topic in

Applied Sciences, Computation, Entropy, J. Imaging

Color Image Processing: Models and Methods (CIP: MM)

Topic Editors: Giuliana Ramella, Isabella Torcicollo

Deadline: 30 July 2024

Topic in

Algorithms, Computation, Mathematics, Molecules, Symmetry, Nanomaterials, Materials

Advances in Computational Materials Sciences

Topic Editors: Cuiying Jian, Aleksander Czekanski

Deadline: 30 September 2024

Conferences

6 October 2021–6 October 2031

15th International Conference on Practical Applications of Computational Biology & Bioinformatics

19–21 June 2024

First CEACM Int. Conference on Synergy between Multiphysics/Multiscale Modeling and Machine Learning

Special Issues

Special Issue in

Computation

Emerging Trends and Applications in High-Fidelity Computational Fluid Dynamics Simulation

Guest Editors: Anup Zope, Shanti Bhushan

Deadline: 15 May 2024

Special Issue in

Computation

Artificial Intelligence Applications in Public Health

Guest Editors: Dmytro Chumachenko, Sergiy Yakovlev

Deadline: 31 May 2024

Special Issue in

Computation

Signal Processing and Machine Learning in Data Science

Guest Editors: Maria Trigka, Elias Dritsas

Deadline: 30 June 2024

Special Issue in

Computation

Finite Element Methods with Applications in Civil and Mechanical Engineering

Guest Editors: Gavril Grebenisan, Alin Pop, Dan Claudiu Negrău

Deadline: 15 July 2024