Journal Description

Computers is an international, scientific, peer-reviewed, open access journal of computer science, including computer and network architecture and computer–human interaction as its main foci, published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, Inspec, and other databases.

- Journal Rank: CiteScore - Q2 (Computer Networks and Communications)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 17.7 days after submission; acceptance to publication is undertaken in 3.7 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 2.8 (2022); 5-Year Impact Factor: 2.6 (2022)

Latest Articles

Indoor Scene Classification through Dual-Stream Deep Learning: A Framework for Improved Scene Understanding in Robotics

Computers 2024, 13(5), 121; https://doi.org/10.3390/computers13050121 (registering DOI) - 14 May 2024

Abstract

Indoor scene classification plays a pivotal role in enabling social robots to seamlessly adapt to their environments, facilitating effective navigation and interaction within diverse indoor scenes. By accurately characterizing indoor scenes, robots can autonomously tailor their behaviors, making informed decisions to accomplish specific tasks. Traditional methods relying on manually crafted features encounter difficulties when characterizing complex indoor scenes. On the other hand, deep learning models address the shortcomings of traditional methods by autonomously learning hierarchical features from raw images. Despite the success of deep learning models, existing models still struggle to effectively characterize complex indoor scenes because of the high degree of intra-class variability and inter-class similarity within indoor environments. To address this problem, we propose a dual-stream framework that harnesses both global contextual information and local features for enhanced recognition. The global stream captures high-level features and relationships across the scene. The local stream employs a fully convolutional network to extract fine-grained local information. The proposed dual-stream architecture effectively distinguishes scenes that share similar global contexts but contain different localized objects. We evaluate the performance of the proposed framework on a publicly available benchmark indoor scene dataset. From the experimental results, we demonstrate the effectiveness of the proposed framework.

(This article belongs to the Special Issue Recent Advances in Autonomous Vehicle Solutions)

Open Access Article

Enhancing Workplace Safety through Personalized Environmental Risk Assessment: An AI-Driven Approach in Industry 5.0

by

Janaína Lemos, Vanessa Borba de Souza, Frederico Soares Falcetta, Fernando Kude de Almeida, Tânia M. Lima and Pedro Dinis Gaspar

Computers 2024, 13(5), 120; https://doi.org/10.3390/computers13050120 - 13 May 2024

Abstract

This paper describes an integrated monitoring system designed for individualized environmental risk assessment and management in the workplace. The system incorporates monitoring devices that measure dust, noise, ultraviolet radiation, illuminance, temperature, humidity, and flammable gases. Comprising monitoring devices, a server-based web application for employers, and a mobile application for workers, the system integrates the registration of workers’ health histories, such as common diseases and symptoms related to the monitored agents, and a web-based recommendation system. The recommendation system application uses classifiers to decide the risk/no risk per sensor and crosses this information with fixed rules to define recommendations. The system generates actionable alerts for companies to improve decision-making regarding professional activities and long-term safety planning by analyzing health information through fixed rules and exposure data through machine learning algorithms. As the system must handle sensitive data, data privacy is addressed in communication and data storage. The study provides test results that evaluate the performance of different machine learning models in building an effective recommendation system. Since it was not possible to find public datasets with all the sensor data needed to train artificial intelligence models, it was necessary to build a data generator for this work. By proposing an approach that focuses on individualized environmental risk assessment and management, considering workers’ health histories, this work is expected to contribute to enhancing occupational safety through computational technologies in the Industry 5.0 approach.

Open Access Article

Detection of Crabs and Lobsters Using a Benchmark Single-Stage Detector and Novel Fisheries Dataset

by

Muhammad Iftikhar, Marie Neal, Natalie Hold, Sebastian Gregory Dal Toé and Bernard Tiddeman

Computers 2024, 13(5), 119; https://doi.org/10.3390/computers13050119 (registering DOI) - 11 May 2024

Abstract

Crabs and lobsters are valuable crustaceans that contribute enormously to the seafood needs of the growing human population. This paper presents a comprehensive analysis of single- and multi-stage object detectors for the detection of crabs and lobsters using images captured onboard fishing boats. We investigate the speed and accuracy of multiple object detection techniques using a novel dataset, multiple backbone networks, various input sizes, and fine-tuned parameters. We extend our work to train lightweight models to accommodate the fishing boats equipped with low-power hardware systems. Firstly, we train Faster R-CNN, SSD, and YOLO with different backbones and tuning parameters. The models trained with higher input sizes resulted in lower frames per second (FPS) and vice versa. The base models were highly accurate but were compromised in computational and run-time costs. The lightweight models were adaptable to low-power hardware compared to the base models. Secondly, we improved the performance of YOLO (v3, v4, and tiny versions) using custom anchors generated by the k-means clustering approach using our novel dataset. YOLOv4 and its tiny version achieved a mean average precision (mAP) of 99.2% and 95.2%, respectively. The YOLOv4-tiny trained on the custom anchor-based dataset is capable of precisely detecting crabs and lobsters onboard fishing boats at 64 frames per second (FPS) on an NVIDIA GeForce RTX 3070 GPU. The results obtained identify the strengths and weaknesses of each method towards a trade-off between speed and accuracy for detecting objects in input images.
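The abstract mentions custom anchors generated by k-means clustering but does not give the procedure. As a rough illustration only, the sketch below clusters bounding-box (width, height) pairs using the distance 1 - IoU, a common choice for YOLO-style anchors. The function names, the example boxes, and the choice of distance are assumptions made for this sketch and are not taken from the paper.

```python
import random

def iou_wh(box, anchor):
    """IoU of two (width, height) boxes aligned at a shared corner."""
    inter = min(box[0], anchor[0]) * min(box[1], anchor[1])
    union = box[0] * box[1] + anchor[0] * anchor[1] - inter
    return inter / union

def kmeans_anchors(boxes, k, iters=100, seed=0):
    """Cluster (width, height) pairs with distance 1 - IoU.

    Returns k anchors sorted by area. Hypothetical helper, not the authors' code.
    """
    rng = random.Random(seed)
    anchors = rng.sample(boxes, k)  # start from k distinct boxes
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for box in boxes:
            # Highest IoU is the same as smallest 1 - IoU distance.
            best = max(range(k), key=lambda j: iou_wh(box, anchors[j]))
            clusters[best].append(box)
        updated = []
        for j, members in enumerate(clusters):
            if members:
                updated.append((sum(w for w, _ in members) / len(members),
                                sum(h for _, h in members) / len(members)))
            else:
                updated.append(anchors[j])  # keep an empty cluster's anchor unchanged
        if updated == anchors:  # assignments have stabilised
            break
        anchors = updated
    return sorted(anchors, key=lambda a: a[0] * a[1])

# Made-up box sizes in pixels: three small and three large objects.
example_boxes = [(10, 12), (12, 10), (11, 11), (100, 60), (110, 64), (90, 56)]
print(kmeans_anchors(example_boxes, 2))  # [(11.0, 11.0), (100.0, 60.0)]
```

In practice the input would be the labelled box sizes of the training set, scaled to the network input resolution, and k would match the number of anchors the detector configuration expects.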

(This article belongs to the Special Issue Selected Papers from Computer Graphics & Visual Computing (CGVC 2023))

Open Access Article

An Efficient Attribute-Based Participant Selecting Scheme with Blockchain for Federated Learning in Smart Cities

by

Xiaojun Yin, Haochen Qiu, Xijun Wu and Xinming Zhang

Computers 2024, 13(5), 118; https://doi.org/10.3390/computers13050118 - 9 May 2024

Abstract

In smart cities, large amounts of multi-source data are generated all the time. A model established via machine learning can mine information from these data and enable many valuable applications. With concerns about data privacy, it is becoming increasingly difficult for the publishers of these applications to obtain users’ data, which hinders the previous paradigm of centralized training through collecting data on a large scale. Federated learning is expected to prevent the leakage of private data by allowing users to train models locally. The existing works generally ignore architectures designed in real scenarios. Thus, there still exist some challenges that have not yet been explored in federated learning applied in smart cities, such as avoiding sharing models with improper parties under privacy requirements and designing satisfactory incentive mechanisms. Therefore, we propose an efficient attribute-based participant selecting scheme to ensure that only someone who meets the requirements of the task publisher can participate in training under the premise of high privacy requirements, so as to improve the efficiency and avoid attacks. We further extend our scheme to encourage clients to take part in federated learning and provide an audit mechanism using a consortium blockchain. Finally, we present an in-depth discussion of the proposed scheme by comparing it to different methods. The results show that our scheme can improve the efficiency of federated learning by enabling reliable participant selection and promote the extensive use of federated learning in smart cities.

(This article belongs to the Special Issue Blockchain Technology—a Breakthrough Innovation for Modern Industries)

Open Access Systematic Review

A Systematic Review of Using Deep Learning in Aphasia: Challenges and Future Directions

by

Yin Wang, Weibin Cheng, Fahim Sufi, Qiang Fang and Seedahmed S. Mahmoud

Computers 2024, 13(5), 117; https://doi.org/10.3390/computers13050117 - 9 May 2024

Abstract

In this systematic literature review, the intersection of deep learning applications within the aphasia domain is meticulously explored, acknowledging the condition’s complex nature and the nuanced challenges it presents for language comprehension and expression. By harnessing data from primary databases and employing advanced query methodologies, this study synthesizes findings from 28 relevant documents, unveiling a landscape marked by significant advancements and persistent challenges. Through a methodological lens grounded in the PRISMA framework (Version 2020) and Machine Learning-driven tools like VosViewer (Version 1.6.20) and Litmaps (Free Version), the research delineates the high variability in speech patterns, the intricacies of speech recognition, and the hurdles posed by limited and diverse datasets as core obstacles. Innovative solutions such as specialized deep learning models, data augmentation strategies, and the pivotal role of interdisciplinary collaboration in dataset annotation emerge as vital contributions to this field. The analysis culminates in identifying theoretical and practical pathways for surmounting these barriers, highlighting the potential of deep learning technologies to revolutionize aphasia assessment and treatment. This review not only consolidates current knowledge but also charts a course for future research, emphasizing the need for comprehensive datasets, model optimization, and integration into clinical workflows to enhance patient care. Ultimately, this work underscores the transformative power of deep learning in advancing aphasia diagnosis, treatment, and support, heralding a new era of innovation and interdisciplinary collaboration in addressing this challenging disorder.

(This article belongs to the Special Issue Machine and Deep Learning in the Health Domain 2024)

Open Access Article

A Hybrid Deep Learning Architecture for Apple Foliar Disease Detection

by

Adnane Ait Nasser and Moulay A. Akhloufi

Computers 2024, 13(5), 116; https://doi.org/10.3390/computers13050116 - 7 May 2024

Abstract

Incorrectly diagnosing plant diseases can lead to various undesirable outcomes. This includes the potential for the misuse of unsuitable herbicides, resulting in harm to both plants and the environment. Examining plant diseases visually is a complex and challenging procedure that demands considerable time and resources. Moreover, it necessitates keen observational skills from agronomists and plant pathologists. Precise identification of plant diseases is crucial to enhance crop yields, ultimately guaranteeing the quality and quantity of production. The latest progress in deep learning (DL) models has demonstrated encouraging outcomes in the identification and classification of plant diseases. In the context of this study, we introduce a novel hybrid deep learning architecture named “CTPlantNet”. This architecture employs convolutional neural network (CNN) models and a vision transformer model to efficiently classify plant foliar diseases, contributing to the advancement of disease classification methods in the field of plant pathology research. This study utilizes two open-access datasets. The first one is the Plant Pathology 2020-FGVC-7 dataset, comprising a total of 3526 images depicting apple leaves and divided into four distinct classes: healthy, scab, rust, and multiple. The second dataset is Plant Pathology 2021-FGVC-8, containing 18,632 images classified into six categories: healthy, scab, rust, powdery mildew, frog eye spot, and complex. The proposed architecture demonstrated remarkable performance across both datasets, outperforming state-of-the-art models with an accuracy (ACC) of 98.28% for Plant Pathology 2020-FGVC-7 and 95.96% for Plant Pathology 2021-FGVC-8.

(This article belongs to the Special Issue Harnessing Artificial Intelligence for Social and Semantic Understanding)

Open Access Article

Performance Comparison of CFD Microbenchmarks on Diverse HPC Architectures

by

Flavio C. C. Galeazzo, Marta Garcia-Gasulla, Elisabetta Boella, Josep Pocurull, Sergey Lesnik, Henrik Rusche, Simone Bnà, Matteo Cerminara, Federico Brogi, Filippo Marchetti, Daniele Gregori, R. Gregor Weiß and Andreas Ruopp

Computers 2024, 13(5), 115; https://doi.org/10.3390/computers13050115 - 7 May 2024

Abstract

OpenFOAM is a CFD software widely used in both industry and academia. The exaFOAM project aims at enhancing the HPC scalability of OpenFOAM, while identifying its current bottlenecks and proposing ways to overcome them. For the assessment of the software components and the code profiling during the code development, lightweight but significant benchmarks should be used. The answer was to develop microbenchmarks, with a small memory footprint and short runtime. The name microbenchmark does not mean that they have been prepared to be the smallest possible test cases, as they have been developed to fit in a compute node, which usually has dozens of compute cores. The microbenchmarks cover a broad band of applications: incompressible and compressible flow, combustion, viscoelastic flow and adjoint optimization. All benchmarks are part of the OpenFOAM HPC Technical Committee repository and are fully accessible. The performance using HPC systems with Intel and AMD processors (x86_64 architecture) and Arm processors (aarch64 architecture) has been benchmarked. For the workloads in this study, the mean performance with the AMD CPU is 62% higher than with Arm and 42% higher than with Intel. The AMD processor seems particularly well suited, resulting in an overall shorter time-to-solution.

(This article belongs to the Special Issue Best Practices, Challenges and Opportunities in Software Engineering)

Open Access Article

Harnessing Machine Learning to Unveil Emotional Responses to Hateful Content on Social Media

by

Ali Louati, Hassen Louati, Abdullah Albanyan, Rahma Lahyani, Elham Kariri and Abdulrahman Alabduljabbar

Computers 2024, 13(5), 114; https://doi.org/10.3390/computers13050114 - 29 Apr 2024

Abstract

Within the dynamic realm of social media, the proliferation of harmful content can significantly influence user engagement and emotional health. This study presents an in-depth analysis that bridges diverse domains, from examining the aftereffects of personal online attacks to the intricacies of online

[...] Read more.

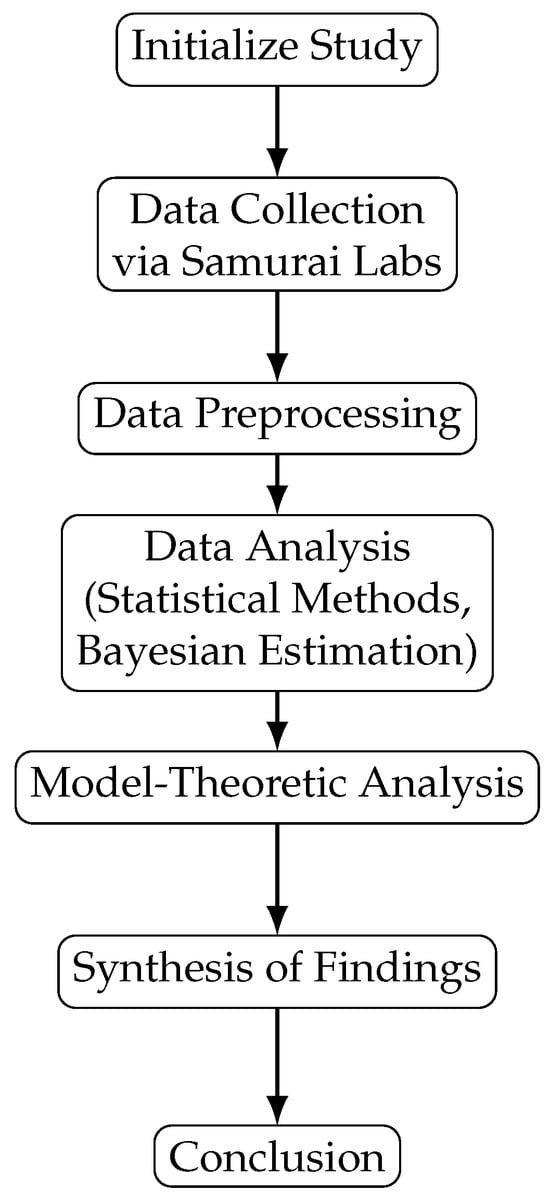

Within the dynamic realm of social media, the proliferation of harmful content can significantly influence user engagement and emotional health. This study presents an in-depth analysis that bridges diverse domains, from examining the aftereffects of personal online attacks to the intricacies of online trolling. By leveraging an AI-driven framework, we systematically implemented high-precision attack detection, psycholinguistic feature extraction, and sentiment analysis algorithms, each tailored to the unique linguistic contexts found within user-generated content on platforms like Reddit. Our dataset, which spans a comprehensive spectrum of social media interactions, underwent rigorous analysis employing classical statistical methods, Bayesian estimation, and model-theoretic analysis. This multi-pronged methodological approach allowed us to chart the complex emotional responses of users subjected to online negativity, covering a spectrum from harassment and cyberbullying to subtle forms of trolling. Empirical results from our study reveal a clear dose–response effect; personal attacks are quantifiably linked to declines in user activity, with our data indicating a 5% reduction after 1–2 attacks, 15% after 3–5 attacks, and 25% after 6–10 attacks, demonstrating the significant deterring effect of such negative encounters. Moreover, sentiment analysis unveiled the intricate emotional reactions users have to these interactions, further emphasizing the potential for AI-driven methodologies to promote more inclusive and supportive digital communities. This research underscores the critical need for interdisciplinary approaches in understanding social media’s complex dynamics and sheds light on significant insights relevant to the development of regulation policies, the formation of community guidelines, and the creation of AI tools tailored to detect and counteract harmful content. 
The goal is to mitigate the impact of such content on user emotions and ensure the healthy engagement of users in online spaces.

(This article belongs to the Special Issue Harnessing Artificial Intelligence for Social and Semantic Understanding)

Open Access Article

Enhancing Reliability in Rural Networks Using a Software-Defined Wide Area Network

by

Luca Borgianni, Davide Adami, Stefano Giordano and Michele Pagano

Computers 2024, 13(5), 113; https://doi.org/10.3390/computers13050113 - 28 Apr 2024

Abstract

Due to limited infrastructure and remote locations, rural areas often need help providing reliable and high-quality network connectivity. We propose an innovative approach that leverages Software-Defined Wide Area Network (SD-WAN) architecture to enhance reliability in such challenging rural scenarios. Our study focuses on

[...] Read more.

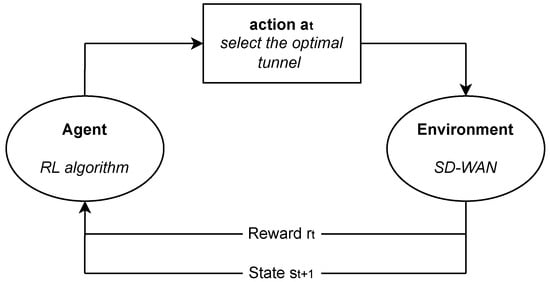

Due to limited infrastructure and remote locations, rural areas often struggle to provide reliable and high-quality network connectivity. We propose an innovative approach that leverages Software-Defined Wide Area Network (SD-WAN) architecture to enhance reliability in such challenging rural scenarios. Our study focuses on cases in which network resources are limited to network solutions such as Long-Term Evolution (LTE) and a Low-Earth-Orbit satellite connection. The SD-WAN implementation compares three tunnel selection algorithms that leverage real-time network performance monitoring: Deterministic, Random, and Deep Q-learning. The results offer valuable insights into the practical implementation of SD-WAN for rural connectivity scenarios, showing its potential to bridge the digital divide in underserved areas.

(This article belongs to the Special Issue Emerging Trends and Challenges of Software-Defined Networking (SDN) Technologies)

Open Access Article

Fruit and Vegetables Blockchain-Based Traceability Platform

by

Ricardo Morais, António Miguel Rosado da Cruz and Estrela Ferreira Cruz

Computers 2024, 13(5), 112; https://doi.org/10.3390/computers13050112 - 26 Apr 2024

Abstract

Fresh food is difficult to preserve, especially because its characteristics can change, and its nutritional value may decrease. Therefore, from the consumer’s point of view, it would be very useful if, when buying fresh fruit or vegetables, they could know where it has

[...] Read more.

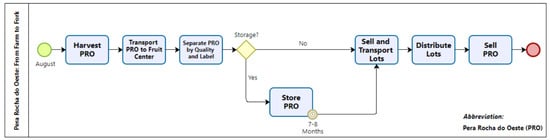

Fresh food is difficult to preserve, especially because its characteristics can change, and its nutritional value may decrease. Therefore, from the consumer’s point of view, it would be very useful if, when buying fresh fruit or vegetables, they could know where it has been cultivated, when it was harvested and everything that has happened from its harvest until it reached the supermarket shelf. In other words, the consumer would like to have information about the traceability of the fruit or vegetables they intend to buy. This article presents a blockchain-based platform that allows institutions, consumers and business partners to track, back and forward, quality and sustainability information about all types of fresh fruits and vegetables.

(This article belongs to the Special Issue Selected Papers from 18th Iberian Conference on Information Systems and Technologies (CISTI'2023))

Open Access Article

Applying Bounding Techniques on Grammatical Evolution

by

Ioannis G. Tsoulos, Alexandros Tzallas and Evangelos Karvounis

Computers 2024, 13(5), 111; https://doi.org/10.3390/computers13050111 - 23 Apr 2024

Abstract

The Grammatical Evolution technique has been successfully applied to some datasets from various scientific fields. However, in Grammatical Evolution, the chromosomes can be initialized at wide value intervals, which can lead to a decrease in the efficiency of the underlying technique. In this

[...] Read more.

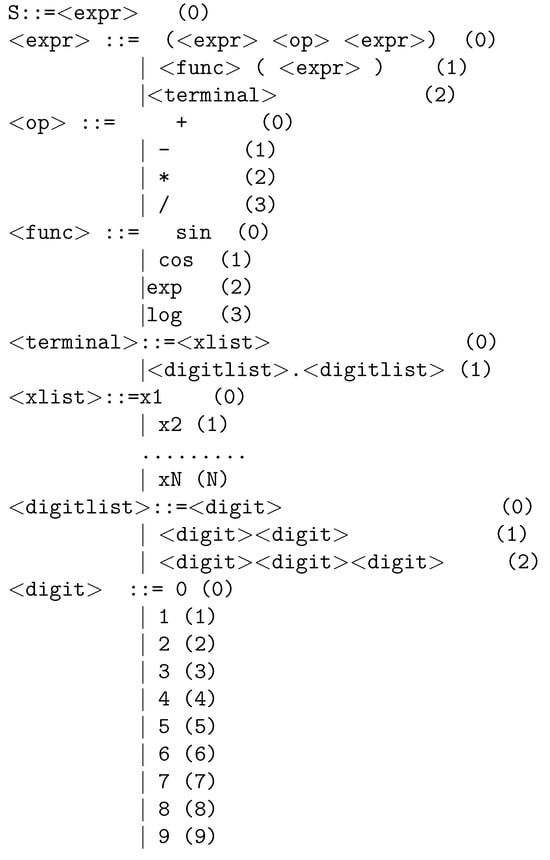

The Grammatical Evolution technique has been successfully applied to some datasets from various scientific fields. However, in Grammatical Evolution, the chromosomes can be initialized at wide value intervals, which can lead to a decrease in the efficiency of the underlying technique. In this paper, a technique for discovering appropriate intervals for the initialization of chromosomes is proposed using partition rules guided by a genetic algorithm. This method has been applied to feature construction techniques used in a variety of scientific papers. After successfully finding a promising interval, the feature construction technique is applied and the chromosomes are initialized within that interval. This technique was applied to a number of known problems in the relevant literature, and the results are extremely promising.

(This article belongs to the Special Issue Feature Papers in Computers 2024)

Open Access Article

User Experience in Neurofeedback Applications Using AR as Feedback Modality

by

Lisa Maria Berger, Guilherme Wood and Silvia Erika Kober

Computers 2024, 13(5), 110; https://doi.org/10.3390/computers13050110 - 23 Apr 2024

Abstract

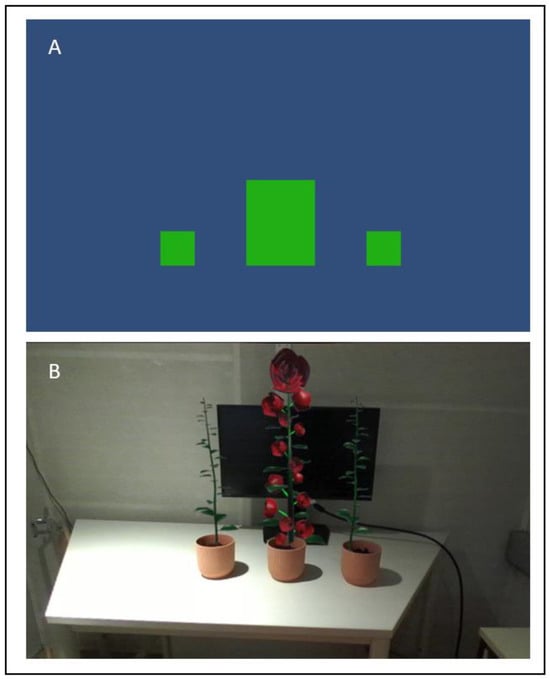

Neurofeedback (NF) is a brain–computer interface in which users can learn to modulate their own brain activation while receiving real-time feedback thereof. To increase motivation and adherence to training, virtual reality has recently been used as a feedback modality. In the presented study,

[...] Read more.

Neurofeedback (NF) is a brain–computer interface in which users can learn to modulate their own brain activation while receiving real-time feedback thereof. To increase motivation and adherence to training, virtual reality has recently been used as a feedback modality. In the presented study, we focused on the effects of augmented reality (AR) based visual feedback on subjective user experience, including positive/negative affect, cybersickness, flow experience, and experience with the use of this technology, and compared it with a traditional 2D feedback modality. Also, half of the participants got real feedback and the other half got sham feedback. All participants performed one NF training session, in which they tried to increase their sensorimotor rhythm (SMR, 12–15 Hz) over central brain areas. Forty-four participants received conventional 2D visual feedback (moving bars on a conventional computer screen) about real-time changes in SMR activity, while 45 participants received AR feedback (3D virtual flowers grew out of a real pot). The subjective user experience differed in several points between the groups. Participants from the AR group received a tendentially higher flow score, and the AR sham group perceived a tendentially higher feeling of flow than the 2D sham group. Further, participants from the AR group reported a higher technology usability, experienced a higher feeling of control, and perceived themselves as more successful than those from the 2D group. Psychological factors like this are crucial for NF training motivation and success. In the 2D group, participants reported more concern related to their performance, a tendentially higher technology anxiety, and also more physical discomfort. These results show the potential advantage of the use of AR-based feedback in NF applications over traditional feedback modalities.

(This article belongs to the Special Issue Extended or Mixed Reality (AR + VR): Technology and Applications)

Open Access Article

A Novel Hybrid Vision Transformer CNN for COVID-19 Detection from ECG Images

by

Mohamed Rami Naidji and Zakaria Elberrichi

Computers 2024, 13(5), 109; https://doi.org/10.3390/computers13050109 - 23 Apr 2024

Abstract

►▼

Show Figures

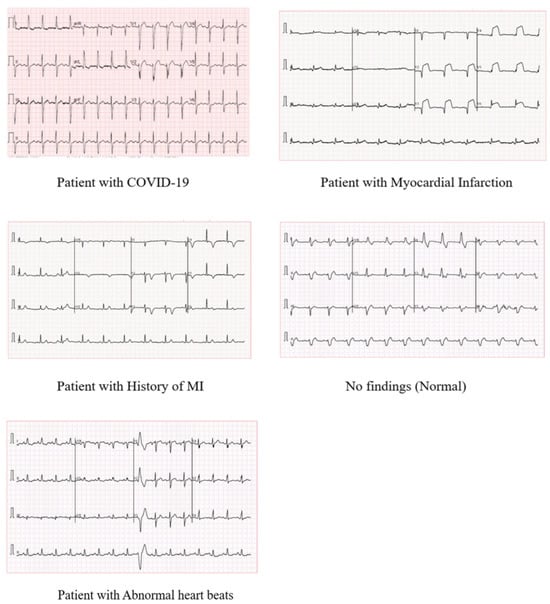

The emergence of the novel coronavirus in Wuhan, China since 2019, has put the world in an exotic state of emergency and affected millions of lives. It is five times more deadly than Influenza and causes significant morbidity and mortality. COVID-19 mainly affects

[...] Read more.

The emergence of the novel coronavirus in Wuhan, China, in 2019 has put the world in an exceptional state of emergency and affected millions of lives. It is five times more deadly than influenza and causes significant morbidity and mortality. COVID-19 mainly affects the pulmonary system, leading to respiratory disorders. However, earlier studies indicated that COVID-19 infection may cause cardiovascular diseases, which can be detected using an electrocardiogram (ECG). This work introduces an advanced deep learning architecture for the automatic detection of COVID-19 and heart diseases from ECG images. In particular, a hybrid combination of the EfficientNet-B0 CNN model and Vision Transformer is adopted in the proposed architecture. To our knowledge, this study is the first research endeavor to investigate the potential of the vision transformer model to identify COVID-19 in ECG data. We carry out two classification schemes: a binary classification to identify COVID-19 cases, and a multi-class classification to differentiate COVID-19 cases from normal cases and other cardiovascular diseases. The proposed method surpasses existing state-of-the-art approaches, demonstrating an accuracy of 100% and 95.10% for binary and multiclass levels, respectively. These results prove that artificial intelligence can potentially be used to detect cardiovascular anomalies caused by COVID-19, which may help clinicians overcome the limitations of traditional diagnosis.

Open Access Article

Performance Evaluation and Analysis of Urban-Suburban 5G Cellular Networks

by

Aymen I. Zreikat and Shinu Mathew

Computers 2024, 13(4), 108; https://doi.org/10.3390/computers13040108 - 22 Apr 2024

Abstract

►▼

Show Figures

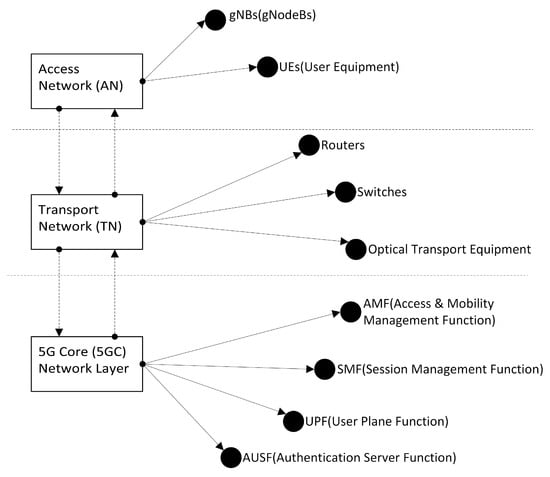

5G is the fifth-generation technology standard for the new generation of cellular networks. Combining 5G and millimeter waves (mmWave) gives tremendous capacity and even lower latency, allowing you to fully enjoy the 5G experience. 5G is the successor to the fourth generation (4G)

[...] Read more.

5G is the fifth-generation technology standard for the new generation of cellular networks. Combining 5G and millimeter waves (mmWave) gives tremendous capacity and even lower latency, allowing you to fully enjoy the 5G experience. 5G is the successor to the fourth generation (4G), which provides high-speed networks to support traffic capacity, higher throughput, and network efficiency as well as supporting massive applications, especially internet-of-things (IoT) and machine-to-machine areas. Therefore, performance evaluation and analysis of such systems is a critical research task that needs to be conducted by researchers. In this paper, a new model structure of an urban-suburban environment in a 5G network formed of seven cells with a central urban cell (hot spot) surrounded by six suburban cells is introduced. With the proposed model, the end-user can have continuous connectivity under different propagation environments. Based on the suggested model, the related capacity bounds are derived and the performance of a 5G network is studied via a simulation considering different parameters that affect the performance, such as the non-orthogonality factor, the load concentration in both urban and suburban areas, the height of the mobile, the height of the base station, the radius, and the distance between base stations. Blocking probability and bandwidth utilization are the two main performance measures that are studied; however, the effect of the above parameters on the system capacity is also introduced. The provided numerical results, which are based on a network-level call admission control algorithm, reveal that the investigated parameters have a major influence on the network performance. Therefore, the outcome of this research can be a very useful tool to be considered by mobile operators in the network planning of 5G.

Open Access Article

Blockchain Integration and Its Impact on Renewable Energy

by

Hamed Taherdoost

Computers 2024, 13(4), 107; https://doi.org/10.3390/computers13040107 - 22 Apr 2024

Abstract

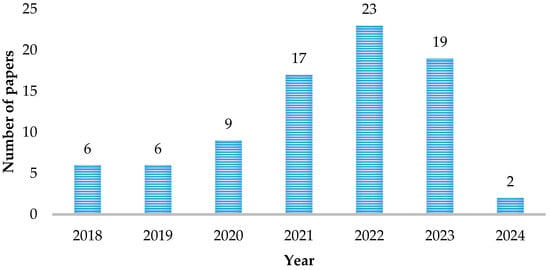

This paper investigates the evolving landscape of blockchain technology in renewable energy. The study, based on a Scopus database search on 21 February 2024, reveals a growing trend in scholarly output, predominantly in engineering, energy, and computer science. The diverse range of source

[...] Read more.

This paper investigates the evolving landscape of blockchain technology in renewable energy. The study, based on a Scopus database search on 21 February 2024, reveals a growing trend in scholarly output, predominantly in engineering, energy, and computer science. The diverse range of source types and global contributions, led by China, reflects the interdisciplinary nature of this field. This comprehensive review delves into 33 research papers, examining the integration of blockchain in renewable energy systems, encompassing decentralized power dispatching, certificate trading, alternative energy selection, and management in applications like intelligent transportation systems and microgrids. The papers employ theoretical concepts such as decentralized power dispatching models and permissioned blockchains, utilizing methodologies involving advanced algorithms, consensus mechanisms, and smart contracts to enhance efficiency, security, and transparency. The findings suggest that blockchain integration can reduce costs, increase renewable source utilization, and optimize energy management. Despite these advantages, challenges including uncertainties, privacy concerns, scalability issues, and energy consumption are identified, alongside legal and regulatory compliance and market acceptance hurdles. Overcoming resistance to change and building trust in blockchain-based systems are crucial for successful adoption, emphasizing the need for collaborative efforts among industry stakeholders, regulators, and technology developers to unlock the full potential of blockchains in renewable energy integration.

(This article belongs to the Special Issue Blockchain Technology—a Breakthrough Innovation for Modern Industries)

Open Access Article

Cognitive Classifier of Hand Gesture Images for Automated Sign Language Recognition: Soft Robot Assistance Based on Neutrosophic Markov Chain Paradigm

by

Muslem Al-Saidi, Áron Ballagi, Oday Ali Hassen and Saad M. Saad

Computers 2024, 13(4), 106; https://doi.org/10.3390/computers13040106 - 22 Apr 2024

Abstract

In recent years, Sign Language Recognition (SLR) has become an additional topic of discussion in the human–computer interface (HCI) field. The most significant difficulty confronting SLR is finding algorithms that will scale effectively with a growing vocabulary size and a limited supply of training data for signer-independent applications. Due to its sensitivity to shape information, automated SLR based on hidden Markov models (HMMs) cannot characterize the confusing distributions of the observations in gesture features with sufficiently precise parameters. In order to simulate uncertainty in hypothesis spaces, many scholars provide an extension of the HMMs, utilizing higher-order fuzzy sets to generate interval-type-2 fuzzy HMMs. This expansion is helpful because it brings the uncertainty and fuzziness of conventional HMM mapping under control. The neutrosophic sets are used in this work to deal with indeterminacy in a practical SLR setting. Existing interval-type-2 fuzzy HMMs cannot consider uncertain information that includes indeterminacy. However, the neutrosophic hidden Markov model successfully identifies the best route between states when there is vagueness. The three neutrosophic membership functions (truth, indeterminate, and falsity grades) provide more layers of autonomy for assessing HMM’s uncertainty. This approach could be helpful for an extensive vocabulary and hence seeks to solve the scalability issue. In addition, it may function independently of the signer, without needing data gloves or any other input devices. The experimental results demonstrate that the neutrosophic HMM is nearly as computationally difficult as the fuzzy HMM but has a similar performance and is more robust to gesture variations.

(This article belongs to the Special Issue Uncertainty-Aware Artificial Intelligence)

►▼

Show Figures

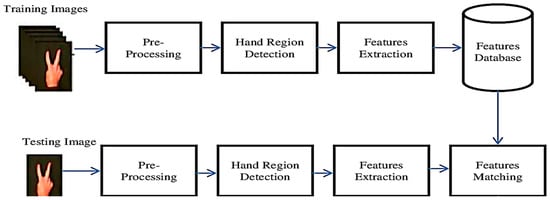

Figure 1

Open Access Review

Smart Healthcare System in Server-Less Environment: Concepts, Architecture, Challenges, Future Directions

by

Rup Kumar Deka, Akash Ghosh, Sandeep Nanda, Rabindra Kumar Barik and Manob Jyoti Saikia

Computers 2024, 13(4), 105; https://doi.org/10.3390/computers13040105 - 19 Apr 2024

Abstract

Server-less computing is a novel cloud-based paradigm that is gaining popularity today for running widely distributed applications. When it comes to server-less computing, features are available via subscription. Server-less computing is advantageous to developers since it lets them install and run programs without worrying about the underlying architecture. A common choice for code deployment these days, server-less design is preferred because of its independence, affordability, and simplicity. The healthcare industry is one excellent setting in which server-less computing can shine. In the existing literature, few studies have been put forward or explored in the area of server-less computing with respect to smart healthcare systems. A cloud infrastructure can help deliver services to both users and healthcare providers. The main aim of our research is to cover various topics on the implementation of server-less computing in the current healthcare sector. We have reviewed studies adopted in the healthcare domain and report an in-depth analysis in this article. We list various issues and challenges, along with recommendations for adopting server-less computing in the healthcare sector.

Open Access Article

Using Privacy-Preserving Algorithms and Blockchain Tokens to Monetize Industrial Data in Digital Marketplaces

by

Borja Bordel Sánchez, Ramón Alcarria, Latif Ladid and Aurel Machalek

Computers 2024, 13(4), 104; https://doi.org/10.3390/computers13040104 - 18 Apr 2024

Abstract

The data economy has arisen in most developed countries. Instruments and tools to extract knowledge and value from large collections of data are now available and enable new industries, business models, and jobs. However, the current data market is asymmetric and prevents companies

[...] Read more.

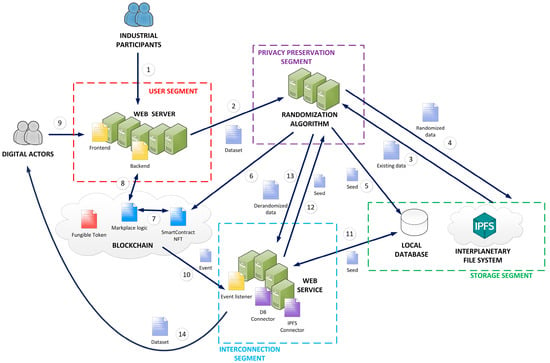

The data economy has arisen in most developed countries. Instruments and tools to extract knowledge and value from large collections of data are now available and enable new industries, business models, and jobs. However, the current data market is asymmetric and prevents companies from competing fairly. On the one hand, only very specialized digital organizations can manage complex data technologies such as Artificial Intelligence and obtain great benefits from third-party data at a very reduced cost. On the other hand, datasets are produced by regular companies as valueless sub-products that assume great costs. These companies have no mechanisms to negotiate a fair distribution of the benefits derived from their industrial data, which are often transferred for free. Therefore, new digital data-driven marketplaces must be enabled to facilitate fair data trading among all industrial agents. In this paper, we propose a blockchain-enabled solution to monetize industrial data. Industries can upload their data to an Inter-Planetary File System (IPFS) using a web interface, where the data are randomized through a privacy-preserving algorithm. In parallel, a blockchain network creates a Non-Fungible Token (NFT) to represent the dataset. So, only the NFT owner can obtain the required seed to derandomize and extract all data from the IPFS. Data trading is then represented by NFT trading and is based on fungible tokens, so it is easier to adapt prices to the real economy. Auctions and purchases are also managed through a common web interface. Experimental validation based on a pilot deployment is conducted. The results show a significant improvement in the data transactions and quality of experience of industrial agents.

(This article belongs to the Special Issue Selected Papers from 18th Iberian Conference on Information Systems and Technologies (CISTI'2023))

Open Access Article

Continuous Authentication in the Digital Age: An Analysis of Reinforcement Learning and Behavioral Biometrics

by

Priya Bansal and Abdelkader Ouda

Computers 2024, 13(4), 103; https://doi.org/10.3390/computers13040103 - 18 Apr 2024

Abstract

This research article delves into the development of a reinforcement learning (RL)-based continuous authentication system utilizing behavioral biometrics for user identification on computing devices. Keystroke dynamics are employed to capture unique behavioral biometric signatures, while a reward-driven RL model is deployed to authenticate

[...] Read more.

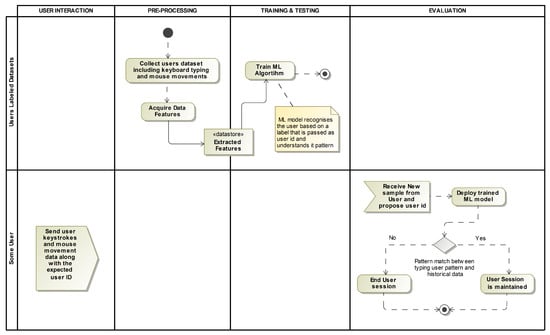

This research article delves into the development of a reinforcement learning (RL)-based continuous authentication system utilizing behavioral biometrics for user identification on computing devices. Keystroke dynamics are employed to capture unique behavioral biometric signatures, while a reward-driven RL model is deployed to authenticate users throughout their sessions. The proposed system augments conventional authentication mechanisms, fortifying them with an additional layer of security to create a robust continuous authentication framework compatible with static authentication systems. The methodology entails training an RL model to discern atypical user typing patterns and identify potentially suspicious activities. Each user’s historical data are utilized to train an agent, which undergoes preprocessing to generate episodes for learning purposes. The environment involves the retrieval of observations, which are intentionally perturbed to facilitate learning of nonlinear behaviors. The observation vector encompasses both ongoing and summarized features. A binary and minimalist reward function is employed, with principal component analysis (PCA) utilized for encoding ongoing features, and the double deep Q-network (DDQN) algorithm implemented through a fully connected neural network serving as the policy net. Evaluation results showcase training accuracy and equal error rate (EER) ranging from 94.7% to 100% and 0 to 0.0126, respectively, while test accuracy and EER fall within the range of approximately 81.06% to 93.5% and 0.0323 to 0.11, respectively, for all users as encoder features increase in number. These outcomes are achieved through RL’s iterative refinement of rewards via trial and error, leading to enhanced accuracy over time as more data are processed and incorporated into the system.

(This article belongs to the Special Issue Innovative Authentication Methods)

Open Access Article

A Holistic Approach to Use Educational Robots for Supporting Computer Science Courses

by

Zhumaniyaz Mamatnabiyev, Christos Chronis, Iraklis Varlamis, Yassine Himeur and Meirambek Zhaparov

Computers 2024, 13(4), 102; https://doi.org/10.3390/computers13040102 - 17 Apr 2024

Abstract

Robots are intelligent machines that are capable of autonomously performing intricate sequences of actions, with their functionality being primarily driven by computer programs and machine learning models. Educational robots are specifically designed and used for teaching and learning purposes and attain the interest

[...] Read more.

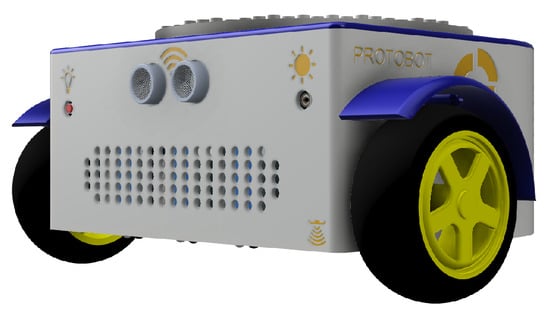

Robots are intelligent machines that are capable of autonomously performing intricate sequences of actions, with their functionality being primarily driven by computer programs and machine learning models. Educational robots are specifically designed and used for teaching and learning purposes and attract the interest of learners in gaining knowledge about science, technology, engineering, arts, and mathematics. Educational robots are widely applied in different fields of primary and secondary education, but their usage in teaching higher education subjects is limited. Even when educational robots are used in tertiary education, the use is sporadic, targets specific courses or subjects, and employs robots with narrow applicability. In this work, we propose a holistic approach to the use of educational robots in tertiary education. We demonstrate how an open-source educational robot can be used by colleges and universities in teaching multiple courses of a computer science curriculum, fostering computational and creative thinking in practice. We rely on an open-source and open-design educational robot, called FOSSBot, which contains various IoT technologies for measuring data, processing it, and interacting with the physical world. Thanks to its open nature, FOSSBot can be used in preparing the content and supporting learning activities for different subjects such as electronics, computer networks, artificial intelligence, computer vision, etc. To support our claim, we describe a computer science curriculum containing a wide range of computer science courses and explain how each course can be supported by providing indicative activities. The proposed one-year curriculum can be delivered at the postgraduate level, allowing computer science graduates to delve deep into computer science subjects. After examining related works that propose the use of robots in academic curricula, we detect the gap that still exists for a curriculum that is linked to an educational robot, and we present in detail each proposed course, the software libraries that can be employed for each course, and the possible extensions to the open robot that will allow the curriculum to be further extended with more topics or enhanced with activities. With our work, we show that by incorporating educational robots in higher education we can address this gap and provide a new ledger for boosting tertiary education.

(This article belongs to the Special Issue Feature Papers in Computers 2024)

Topics

Topic in

Applied Sciences, Computers, Digital, Electronics, Smart Cities

Artificial Intelligence Models, Tools and Applications

Topic Editors: Phivos Mylonas, Katia Lida Kermanidis, Manolis Maragoudakis

Deadline: 31 August 2024

Topic in

Biomedicines, Computers, Information, IJERPH, JPM

eHealth and mHealth: Challenges and Prospects, 2nd Volume

Topic Editors: Antonis Billis, Manuel Dominguez-Morales, Anton Civit

Deadline: 30 September 2024

Topic in

Applied Sciences, Computers, Electronics, JSAN, Technologies

Emerging AI+X Technologies including Selected Papers from ICGHIT 2024

Topic Editors: Byung-Seo Kim, Hyunsik Ahn, Kyu-Tae Lee

Deadline: 31 October 2024

Topic in

Computers, Informatics, Information, Logistics, Mathematics, Algorithms

Decision Science Applications and Models (DSAM)

Topic Editors: Daniel Riera Terrén, Angel A. Juan, Majsa Ammuriova, Laura Calvet

Deadline: 31 December 2024

Special Issues

Special Issue in

Computers

Information Technology: New Generations (ITNG 2023 and 2024)

Guest Editor: Shahram Latifi

Deadline: 15 May 2024

Special Issue in

Computers

Machine and Deep Learning in the Health Domain 2024

Guest Editor: Hersh Sagreiya

Deadline: 20 June 2024

Special Issue in

Computers

Game-Based Learning, Gamification in Education and Serious Games 2023

Guest Editors: Ricardo Baptista, Carlos Vaz de Carvalho, Hariklia Tsalapatas

Deadline: 30 June 2024

Special Issue in

Computers

Xtended or Mixed Reality (AR+VR) for Education 2024

Guest Editors: Veronica Rossano, Michele Fiorentino

Deadline: 1 August 2024